It was 2023. The industry was captivated by what AI agents could do, but many had questions about the reliability and consistency of LLMs.

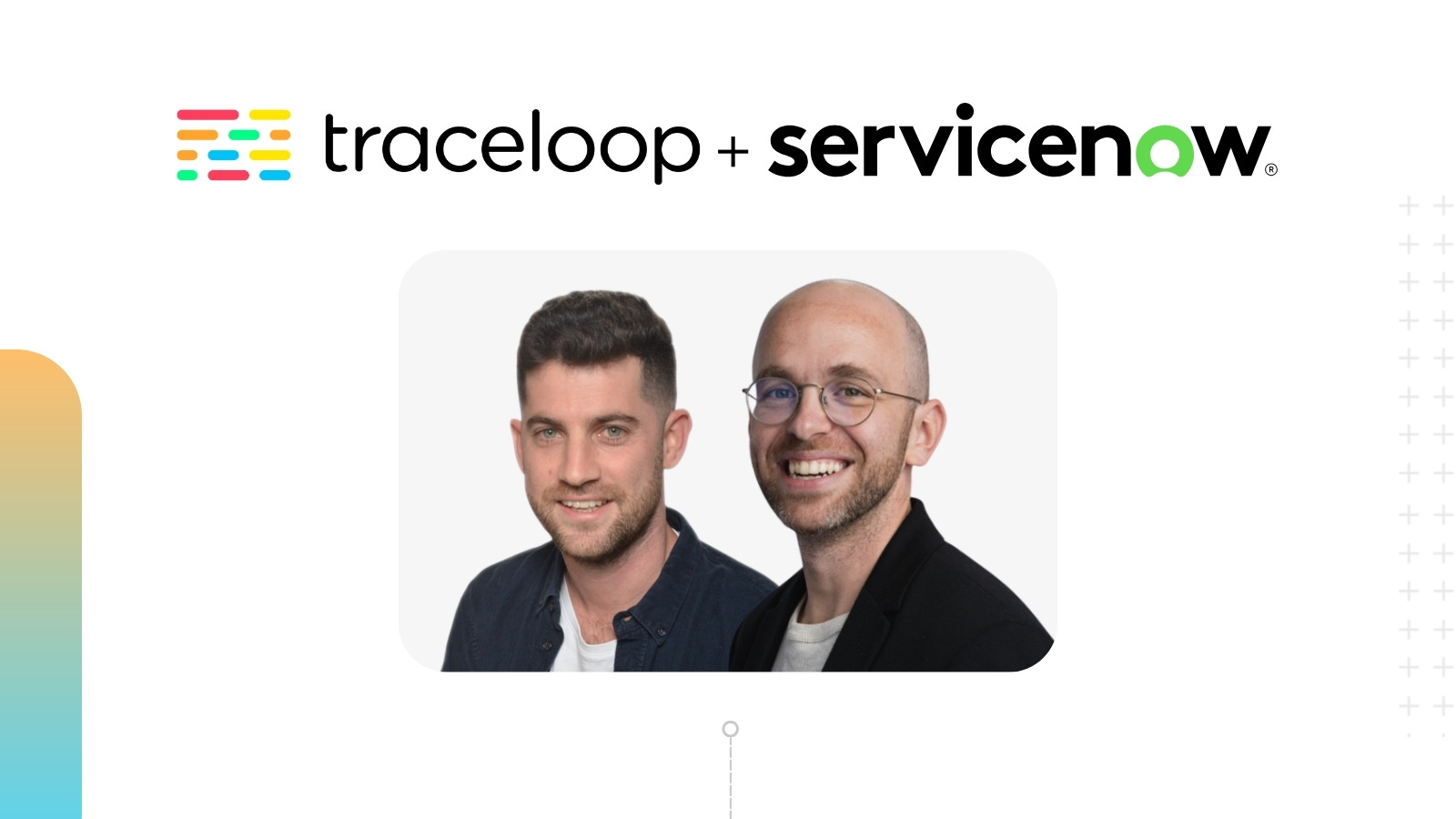

We met Nir Gazit and Gal Kleinman that year. Nir had spent four years at Google building LLM systems to predict user engagement and retention. Gal had led machine learning infrastructure at Fiverr. Both had watched the same pattern repeat: AI that performed beautifully in testing behaved unpredictably in production. Nobody had a good solution.

So they decided to build one.

The core problem was visibility. Once an AI agent leaves the benchmark lab and meets real traffic, product teams go blind. There’s no way to know whether the model that excelled in testing is hallucinating in production. No continuous integration loop. No alerts. When an AI agent misfires, users don’t complain – they simply disengage. Quietly. And the product team is left guessing why.

Nir and Gal set out to change that.

Building the Flight Data Recorder for AI

They built Traceloop as a production-grade observability and evaluation platform for AI systems. Every LLM call could be traced. Automated quality evaluations ran against real traffic. Drift could be detected before users felt it. Experiments could be run with data-backed confidence rather than intuition. For the first time, AI teams had something resembling a flight data recorder – a system that captured what happened in production, why it happened, and how to fix it.

“Prompt engineering shouldn’t be a guessing game or rely on vibes. It should be like the rest of engineering – observable, testable, and reliable.” – Nir Gazit, Co-founder & CEO

Their first move was to build in the open. OpenLLMetry, an observability framework built on OpenTelemetry, launched with a simple promise: one line of code, full observability. The community adopted it quickly. IBM, Microsoft, Cisco, Dynatrace, and Miro were among the first to adopt it. When competitors began building on top of it, the signal was clear: OpenLLMetry had become a standard.

The numbers followed. Five hundred thousand monthly installs. Fifty thousand weekly active SDK users. More than sixty open-source contributors. None of it came from aggressive marketing; it came from solving a real problem cleanly.

The customer results were just as clear. At Miro, which processes millions of conversations across its platform, Traceloop enabled real-world performance visibility and safe model experimentation at scale. IBM used OpenLLMetry with its Instana platform to monitor Bedrock and watsonx.ai workloads, catching performance issues before they reached users. Across the customer base, teams replaced manual prompt tweaking with automated evaluation pipelines, and iteration cycles shortened dramatically.

What Comes Next

The market took notice.

ServiceNow saw what Traceloop had built and recognized the fit. ServiceNow’s AI Control Tower manages and governs every AI system across an organization. What it needed was exactly Traceloop’s core capability: the ability to see not just what AI is doing, but how well it is doing it, in real time, across every model, agent, and workflow. As enterprises move from experimentation to mission-critical deployment, governance and observability are no longer optional. They are prerequisites for trust.

Traceloop had built that trust layer.

For us at Sorenson Capital, this outcome reflects what we believed from the very first meeting. Nir and Gal were building infrastructure, not features: infrastructure the industry needed to meet the challenges of building products on LLMs. Their clear view of how to solve those problems was key to their success.

We’re proud to have backed this team from the beginning alongside Y Combinator, Ibex Ventures, and Grand Ventures. Congratulations to Nir, Gal, and everyone who built Traceloop! We can’t wait to see what comes next.